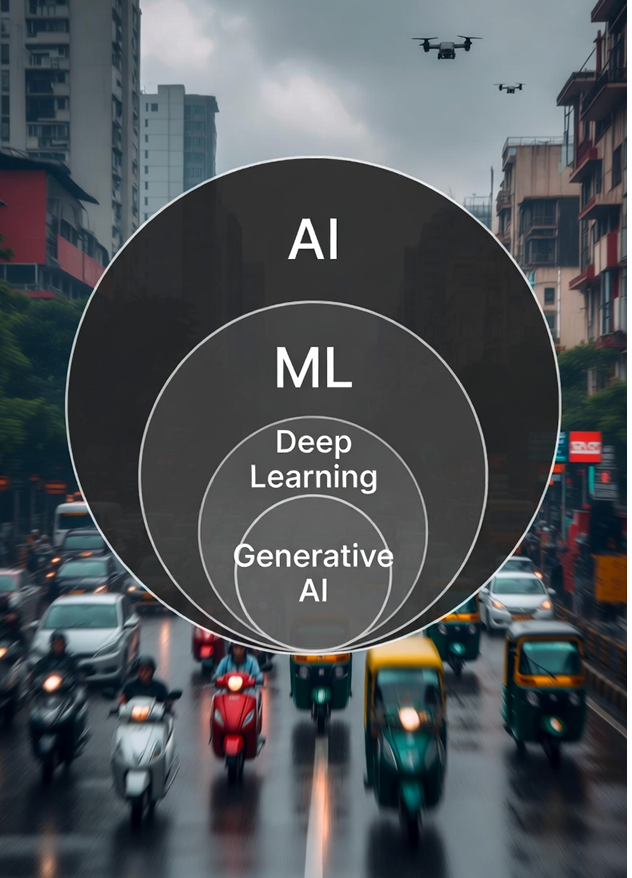

From AI to Gen AI: One Big Nested Family Explained

Here’s a clear, step-by-step explanation of AI, ML, Deep Learning, and Gen AI (as of 2026). These are not competing technologies – they form a nested hierarchy.

AI is the biggest circle → everything else lives inside it.

The Hierarchy (2026 View)

- Artificial Intelligence (AI)

  → The broadest field: creating machines/systems that can perform tasks that normally require human intelligence (reasoning, understanding, learning, perception, decision-making, creativity, etc.).

  - Machine Learning (ML)

    → A major subset of AI.

    → Instead of hard-coding every rule, the system learns patterns from data and improves automatically with experience.

    - Deep Learning (DL)

      → A powerful subset of ML.

      → Uses artificial neural networks with many layers (hence “deep”) to automatically learn very complex patterns from raw data (images, audio, text, etc.).

      - Generative AI (Gen AI / GenAI)

        → The hottest subset of Deep Learning (especially since the ~2022–2023 explosion).

        → Focuses on creating new content (text, images, video, code, music, 3D models…) instead of just classifying or predicting.
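The difference between hand-coding rules and learning from data can be sketched as a toy spam filter. This is a minimal illustration under stated assumptions, not how production filters work: the keyword rule, the six training messages, and the class name `NaiveBayesSpamFilter` are all hypothetical, and the learner is a bare-bones multinomial Naive Bayes with add-one smoothing (one classic ML technique among many).

```python
import math
from collections import Counter


def rule_based_is_spam(message: str) -> bool:
    # Hypothetical hand-written rule: a human decided these two words mean spam.
    words = message.lower().split()
    return "free" in words or "winner" in words


class NaiveBayesSpamFilter:
    """Hypothetical toy learner: multinomial Naive Bayes fitted from labeled examples."""

    def fit(self, messages, labels):
        # Count words per class and documents per class; this is the "learning" step.
        self.word_counts = {True: Counter(), False: Counter()}
        self.class_counts = Counter(labels)
        for msg, is_spam in zip(messages, labels):
            self.word_counts[is_spam].update(msg.lower().split())
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def _log_score(self, words, is_spam):
        # log P(class) + sum of log P(word | class), with add-one (Laplace) smoothing
        total_docs = sum(self.class_counts.values())
        score = math.log(self.class_counts[is_spam] / total_docs)
        counts = self.word_counts[is_spam]
        total_words = sum(counts.values())
        for w in words:
            score += math.log((counts[w] + 1) / (total_words + len(self.vocab)))
        return score

    def predict(self, message):
        words = message.lower().split()
        return self._log_score(words, True) > self._log_score(words, False)


# Hypothetical labeled training data (True = spam)
train_messages = [
    "win a free prize now",
    "claim your free money today",
    "you are a winner claim prize",
    "meeting moved to monday",
    "lunch at noon tomorrow",
    "please review the project report",
]
train_labels = [True, True, True, False, False, False]

model = NaiveBayesSpamFilter().fit(train_messages, train_labels)
print(model.predict("claim your prize money"))       # True
print(model.predict("project meeting tomorrow"))     # False
print(rule_based_is_spam("claim your prize money"))  # False: the rule misses it
```

The hand-written rule only knows the two words someone typed into it, so it misses a spammy message that avoids them; the learned model picked up from the examples that “claim”, “prize”, and “money” tend to appear in spam. Adding more labeled messages improves the model without anyone editing the code.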

Quick Comparison Table (2026 perspective)

| Term | Scope / Level | Core Idea | Learns from data? | Data needs | Typical tasks / outputs | Famous examples (2025–2026) | Main technique / architecture |
|---|---|---|---|---|---|---|---|
| AI | Broadest field | Machines that act intelligently | Sometimes | Varies | Anything intelligent (planning, robotics, games, assistants…) | Chess engines, rule-based systems, modern LLMs | Rules, search, ML, symbolic AI, etc. |
| ML | Subset of AI | Learn from data, improve automatically | Yes | Medium | Classification, regression, clustering, recommendations | Spam filter, Netflix recs, fraud detection | Decision trees, SVM, random forests, logistic regression, shallow nets |
| Deep Learning | Subset of ML | Very deep neural networks | Yes | Very large | Image recognition, speech, translation, complex pattern finding | Face ID, Google Translate (modern), self-driving perception | CNNs, RNNs/LSTMs, Transformers (early) |
| Gen AI | Subset of Deep Learning | Create new realistic content | Yes | Extremely large | Text generation, image gen, video, music, code, synthetic data | ChatGPT, Grok, Claude, Gemini, Midjourney, Flux, Sora, Suno, Kling | Transformers + Diffusion + large-scale autoregressive / foundation models |
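The “very deep neural networks” row rests on one mechanism: layers of simple units whose weights are nudged by backpropagation until the network fits the data. A toy-scale sketch of that mechanism, trained on XOR (a pattern no single straight-line rule can separate), might look like this. Assumptions to flag: it has just one hidden layer (so strictly a shallow net), uses plain Python instead of a framework such as PyTorch, and its width, learning rate, seed, and epoch count are arbitrary illustrative choices.

```python
import math
import random

random.seed(0)  # fixed seed so runs are repeatable

# XOR truth table: the output is 1 only when exactly one input is 1.
# No single straight line separates the 1s from the 0s, so a model without a hidden layer cannot fit it.
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

HIDDEN = 8  # illustrative hidden-layer width
LR = 0.5    # illustrative learning rate

# Hidden layer: 2 inputs -> HIDDEN tanh units. Output layer: HIDDEN -> 1 sigmoid unit.
w1 = [[random.uniform(-1, 1) for _ in range(2)] for _ in range(HIDDEN)]
b1 = [0.0] * HIDDEN
w2 = [random.uniform(-1, 1) for _ in range(HIDDEN)]
b2 = 0.0


def sigmoid(z):
    # Numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def forward(x):
    # Layer 1 builds intermediate features; layer 2 combines them into a probability.
    h = [math.tanh(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j]) for j in range(HIDDEN)]
    y = sigmoid(sum(w2[j] * h[j] for j in range(HIDDEN)) + b2)
    return h, y


def mean_loss():
    # Mean binary cross-entropy over the four examples; y is clamped to avoid log(0)
    total = 0.0
    for x, t in DATA:
        _, y = forward(x)
        y = min(max(y, 1e-12), 1 - 1e-12)
        total -= t * math.log(y) + (1 - t) * math.log(1 - y)
    return total / len(DATA)


initial_loss = mean_loss()
for _ in range(5000):
    for x, t in DATA:
        h, y = forward(x)
        d_out = y - t  # gradient of cross-entropy w.r.t. the output pre-activation
        for j in range(HIDDEN):
            d_h = d_out * w2[j] * (1 - h[j] ** 2)  # backprop through w2 and tanh
            w2[j] -= LR * d_out * h[j]
            w1[j][0] -= LR * d_h * x[0]
            w1[j][1] -= LR * d_h * x[1]
            b1[j] -= LR * d_h
        b2 -= LR * d_out
final_loss = mean_loss()

print(f"loss: {initial_loss:.3f} -> {final_loss:.4f}")
for x, t in DATA:
    print(x, "target", t, "prediction", round(forward(x)[1], 3))
```

Real deep-learning models stack many such layers, have millions to billions of weights, and train on GPUs, but the forward pass, loss, and gradient-update loop are the same basic idea.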

Simple Real-World Analogy (Pune Traffic Style 😄)

Imagine teaching someone to ride a scooter in Pune traffic:

- AI = “Become a good driver overall” (the big goal – handle traffic, signals, pedestrians, rain, potholes, cows…)

- ML = “Learn by watching thousands of rides and gradually getting better” (instead of someone telling you every single rule)

- Deep Learning = “Your brain builds very deep internal understanding – recognizes patterns like ‘that autorickshaw is about to cut in’ without being explicitly taught each case”

- Gen AI = “Now you can imagine and describe completely new traffic situations you’ve never seen – ‘a flying scooter dodging drones in MG Road jam at 8 PM’ – and even draw it or write a funny story about it”

One-line Summary (the version most people remember)

- AI is the dream

- ML is how most of the dream is achieved today

- Deep Learning is what made the recent AI explosion possible

- Gen AI is what made everyone say “wow, AI can now create like humans” since late 2022

All four are connected – almost every impressive Gen AI product in 2026 (Grok, Gemini, Claude, Flux, etc.) is Deep Learning + huge scale + clever training tricks running under the hood.
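Text generators such as ChatGPT, Claude, Gemini, and Grok produce output autoregressively: predict a probability distribution over the next token, sample one, append it, and repeat (image generators largely use diffusion-style models instead). The toy sketch below shows only that generation loop. It is deliberately not deep learning: a table of word-pair (bigram) counts stands in for the deep Transformer, and the four-sentence corpus and the helper names `train_bigram` and `generate` are hypothetical.

```python
import random
from collections import Counter, defaultdict

# Hypothetical toy training corpus (real LLMs train on vastly more text)
CORPUS = [
    "the scooter dodges the auto",
    "the auto cuts in near the signal",
    "the bus waits at the signal",
    "a cow blocks the road near the signal",
]

START, END = "<s>", "</s>"


def train_bigram(sentences):
    """Count how often each word follows each previous word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = [START] + sentence.split() + [END]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, rng, max_words=20):
    """Autoregressive loop: sample the next word given the previous one, append, repeat."""
    words = []
    prev = START
    for _ in range(max_words):
        candidates = counts[prev]
        # Sample proportionally to how often each word followed `prev` in the corpus
        nxt = rng.choices(list(candidates), weights=list(candidates.values()))[0]
        if nxt == END:
            break
        words.append(nxt)
        prev = nxt
    return " ".join(words)


model = train_bigram(CORPUS)
rng = random.Random(42)
for _ in range(3):
    print(generate(model, rng))
```

Because it samples, the loop can output word sequences that never appear in the corpus (for example, “the scooter dodges the signal” is a possible output), which is the “create something new” part in miniature. Replacing the count table with a deep neural network trained at huge scale is, very roughly, the jump from this toy to an LLM.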